Data Migration

Benefit from Effective People’s data migration approach, which is based on our experience completing more than 1,000 implementations.

Data migration solutions are required when an organization needs to move data from one location, format, or application to another. Implementing Software as a Service (SaaS) to support an HR transformation strategy likewise requires advanced data migration tools to ensure that data moves safely to the new solution.

Effective People’s expertise in selecting, preparing, extracting, and transforming data and permanently transferring it to the cloud is based on proven migration methods and tools, combined with our experience in managing more than 1,000 successfully completed data migration projects for diverse clients.

Our Data Migration Approach

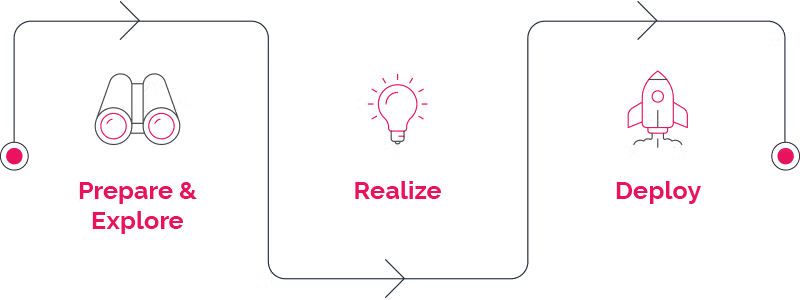

To ensure the best outcomes for your organization, a clear and reliable plan for safely managing your data migration is critical. Effective People’s approach consists of three sequential phases:

Phase 1: Prepare & Explore

The first phase is an exploration to gain a unified view of your organization’s business processes by identifying data and target entities and analyzing source systems. We engage with HR and IT departments to review major risk areas for data migration, such as localization requirements and organizational structure.

Phase 2: Realize

Based on our validation rules and engagement with local HR managers, our data migration consultants extract and convert data from source systems. We conduct migration workshops to ensure high-quality data migration and to perform data quality assessments using our proven Key Performance Indicators (KPIs).

Phase 3: Deploy

In the Deploy phase, our expert team loads the converted data into the new system using our proven approach and validates key data, relationships, and data quality based on industry best practices and our pre-built checks and reports.
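For readers who want a concrete picture of post-load validation, the following is a minimal, illustrative sketch in Python. It is not Effective People’s tooling: the `Employee` record, its fields, the `validate_load` function, and the three checks are all hypothetical, chosen only to show one check each for key data, relationships, and data quality.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Employee:
    # Hypothetical record layout, for illustration only.
    employee_id: str
    manager_id: Optional[str]  # None for the top of the hierarchy
    email: str


def validate_load(source: list, target: list) -> dict:
    """Compare loaded (target) records with converted (source) records."""
    source_ids = {e.employee_id for e in source}
    target_ids = {e.employee_id for e in target}

    # Key data: every converted record should exist in the target system.
    missing = sorted(source_ids - target_ids)

    # Relationships: every manager reference should point to a loaded record.
    broken_links = sorted(
        e.employee_id
        for e in target
        if e.manager_id is not None and e.manager_id not in target_ids
    )

    # Data quality: share of loaded records with a non-empty email address.
    with_email = sum(1 for e in target if e.email.strip())
    completeness = with_email / len(target) if target else 0.0

    return {
        "missing_in_target": missing,
        "broken_manager_links": broken_links,
        "email_completeness": completeness,
    }


# Example: one record was not loaded, which also breaks a manager link.
source = [
    Employee("E1", None, "ceo@example.com"),
    Employee("E2", "E1", "lead@example.com"),
    Employee("E3", "E2", ""),
]
target = [source[0], source[2]]
report = validate_load(source, target)
```

In this example the report flags the record that did not load, the reporting line that now points at a missing manager, and the proportion of loaded records with an email address. A real project would define its own checks and thresholds.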

Business Impact

Data migration solutions are an integral part of the HR transformation journey. Effective People’s extensive framework for risk mitigation and quality assurance can help you safely navigate your way through the complexities involved.

Contact us today

Get top performance from day one with the right data migration approach.